Part 6: Converting and Freezing our CNN

Now that we have built a better CNN by handling both under-fitting and over-fitting, we can begin deploying our model on the FPGA itself. The first step is to convert our Keras model to a TensorFlow model, and then freeze it.

- Introduction

- Getting Started

- Transforming Kaggle Data and Convolutional Neural Networks (CNNs)

- Training our Neural Network

- Optimising our CNN

- Converting and Freezing our CNN (Current)

- Quantising our CNN

- Compiling our CNN

- Running our code on the DPU

- Conclusion Part 1: Improving Convolutional Neural Networks: The weaknesses of the MNIST based datasets and tips for improving poor datasets

- Conclusion Part 2: Sign Language Recognition: Hand Object detection using R-CNN and YOLO

The Sign Language MNIST Github

The Theory of Freezing

Back in Part 4 we mentioned the difference between inference and training, and that because inference is far less memory intensive, it is the more desirable phase to run on FPGAs. We move a model from the training phase to the inference phase by freezing it. Freezing is not just a matter of locking the weights so they no longer change: some of the layers we introduced behave distinctly differently in inference than in training.

Batch Normalization

As explained in Part 5, Batch Normalization zero-centers and normalizes its inputs, then scales and shifts them. To do this, it needs the mean and standard deviation of those inputs. During training, we can simply compute them over the current batch. During inference, however, we may not have a batch at all, or the batches may not be independent and identically distributed. Instead, inference uses the moving averages of the means and standard deviations, which are accumulated during training.
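
The difference between the two modes can be sketched in a few lines of plain Python. This is a toy, single-feature illustration and not the Keras implementation: the class name `BatchNorm1D`, its method names, and the momentum and epsilon values are assumptions made for the example.

```python
import math


class BatchNorm1D:
    """Toy batch normalization for one scalar feature (illustrative only)."""

    def __init__(self, momentum=0.99, eps=1e-3):
        self.gamma = 1.0            # learned scale (left at its initial value here)
        self.beta = 0.0             # learned shift (left at its initial value here)
        self.momentum = momentum    # how slowly the moving statistics change
        self.eps = eps              # avoids division by zero
        self.moving_mean = 0.0
        self.moving_var = 1.0

    def forward_train(self, batch):
        # Training: statistics come from the current batch.
        mean = sum(batch) / len(batch)
        var = sum((x - mean) ** 2 for x in batch) / len(batch)
        # Accumulate the moving averages that inference will rely on.
        self.moving_mean = self.momentum * self.moving_mean + (1 - self.momentum) * mean
        self.moving_var = self.momentum * self.moving_var + (1 - self.momentum) * var
        scale = math.sqrt(var + self.eps)
        return [self.gamma * (x - mean) / scale + self.beta for x in batch]

    def forward_inference(self, x):
        # Inference: no batch is needed, only the stored moving statistics.
        scale = math.sqrt(self.moving_var + self.eps)
        return self.gamma * (x - self.moving_mean) / scale + self.beta


bn = BatchNorm1D(momentum=0.5)
train_out = bn.forward_train([1.0, 3.0])   # normalized with the batch mean and variance
single = bn.forward_inference(1.0)         # normalized with the moving mean and variance
print(train_out, single)
```

Note that `forward_inference` works on a single sample, which is exactly the situation where batch statistics are unavailable.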

Dropout

Dropout is when we randomly drop neurons during the training phase to stop overfitting. Randomly dropping neurons during inference is not a good idea, so dropout does not occur during inference. This is not the end of the story, because on average during training each neuron has had a fraction (equal to the drop rate) of its inputs removed. During inference each neuron will therefore receive more signal on average, and we can counter this effect by multiplying each connection weight by (1 - drop rate) after training has finished. In that scheme, the weights used in inference are a scaled version of those in the training model. Keras achieves the same effect the other way round: it scales the surviving inputs up by 1 / (1 - drop rate) during training, so no rescaling is needed at inference.
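
To see why the scaling works, here is a small sketch in plain Python, assuming a single neuron with made-up inputs and weights. The function names and all numeric values are hypothetical. It compares the average training-time output with dropout against the inference-time output with scaled weights.

```python
import random


def neuron_train(inputs, weights, drop_rate, rng):
    """Weighted sum where each input is dropped with probability drop_rate."""
    return sum(w * x for w, x in zip(weights, inputs) if rng.random() >= drop_rate)


def neuron_inference(inputs, weights, drop_rate):
    """No inputs are dropped; each weight is scaled by the keep probability."""
    keep = 1.0 - drop_rate
    return sum(keep * w * x for w, x in zip(weights, inputs))


rng = random.Random(0)              # fixed seed so the run is repeatable
inputs = [1.0, 2.0, 3.0, 4.0]       # illustrative values only
weights = [0.5, -0.25, 0.1, 0.2]    # illustrative values only
drop_rate = 0.5

trials = 20000
avg_train = sum(neuron_train(inputs, weights, drop_rate, rng) for _ in range(trials)) / trials
inference = neuron_inference(inputs, weights, drop_rate)

# The two values should be close: scaling restores the expected signal level.
print(avg_train, inference)
```

Without the scaling, the inference output would be the full weighted sum, roughly twice what the next layer saw on average during training with a drop rate of 0.5.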

Keras to TensorFlow

Once we have finished training, we need to save our Keras model which consists of:

- The architecture of the model (i.e. the order of our layers)

- The training settings (i.e. the optimizer, decay rate, learn rate)

- The parameters we have just trained (i.e. our weights, the scale and shift values of the batch normalization)

- Our metrics (i.e. our loss and accuracy)

This is all saved to the Keras H5 format:

    model.save(os.path.join('./train','keras_trained_model.h5'))

main.py handles the conversion of our Keras H5 model to TensorFlow. More specifically, we call the function:

    keras2tf('train/keras_trained_model.h5', 'train/tfchkpt.ckpt', 'train')

As part of the conversion, the Keras learning phase is set to 0:

    backend.set_learning_phase(0)

This tells Keras to switch our layers to inference mode. We then also need to set up saving the model:

    # set up the TensorFlow saver object
    saver = tf.train.Saver()

    # fetch the TensorFlow session using the Keras backend
    tf_session = backend.get_session()

    # get the TensorFlow session graph
    input_graph_def = tf_session.graph.as_graph_def()

    # get the TensorFlow graph path, filename and file extension
    tfgraph_path = './train'
    tfgraph_filename = 'tf_complete_model.pb'

    # write out the TensorFlow checkpoint and inference graph for use with the freeze_graph script
    saver.save(tf_session, tfckpt)
    tf.train.write_graph(input_graph_def, tfgraph_path, tfgraph_filename, as_text=False)

Saving the checkpoint writes the following files:

- tfchkpt.ckpt.meta: This file contains the CNN architecture in several different data structures such as GraphDef, separate from any weights, metrics or settings.

- tfchkpt.ckpt.data-00000-of-00001: This is where the training settings, parameters and metrics are held.

- tfchkpt.ckpt.index: This file is essentially a table that maps each tensor to the metadata pertaining to that tensor.

- checkpoint: Contains information on any checkpoints used.

Finally, we write the graph either to a .pb file, which is binary, or to a .pbtxt file, which is human readable. These files contain the series of nodes that describe our CNN architecture. This is slightly different to the .meta file: the .meta file contains a series of data structures, whereas a .pb file contains only the CNN architecture in node format.
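
Before moving on to freezing, it can be worth confirming that all of these files were actually written. The helper below is a hypothetical sketch, not part of the tutorial's main.py. It assumes a single data shard, as in the file names listed above, and the function names are made up for the example.

```python
import os


def expected_conversion_files(ckpt_prefix, graph_path):
    """List the files the conversion step is expected to leave on disk.

    Assumes a single data shard (the '-00000-of-00001' suffix).
    """
    ckpt_dir = os.path.dirname(ckpt_prefix)
    return [
        ckpt_prefix + '.meta',
        ckpt_prefix + '.data-00000-of-00001',
        ckpt_prefix + '.index',
        os.path.join(ckpt_dir, 'checkpoint'),
        graph_path,
    ]


def missing_files(paths):
    """Return the subset of paths that do not exist as regular files."""
    return [p for p in paths if not os.path.isfile(p)]


if __name__ == '__main__':
    files = expected_conversion_files('./train/tfchkpt.ckpt',
                                      './train/tf_complete_model.pb')
    for path in missing_files(files):
        print('missing:', path)
```

If nothing is printed, every expected file is present and the freeze step has the inputs it needs.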

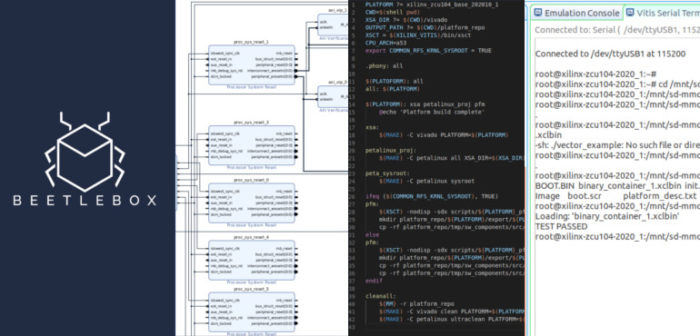

Freezing our graph

We have now converted our Keras file into multiple TensorFlow files. This multi-file format is useful if we want to perform more training, but not so useful for deployment. The freeze_graph script, which is installed automatically, ties the model into a single file for execution and removes any nodes that are not used for inference. We finally get to run something from the command line that is not main.py! In the command line, run:

    freeze_graph --input_graph=./train/tf_complete_model.pb \
                 --input_checkpoint=./train/tfchkpt.ckpt \
                 --input_binary=true \
                 --output_graph=./freeze/frozen_graph.pb \
                 --output_node_names=activation_6_1/Softmax

The freeze_graph script takes our .pb file and combines it with the data held in the tfchkpt.ckpt checkpoint into the single file frozen_graph.pb. It also converts any variables into constants:

    INFO:tensorflow:Froze 24 variables.
    I0723 04:46:52.620175 139954184902464 graph_util_impl.py:334] Froze 24 variables.
    INFO:tensorflow:Converted 24 variables to const ops.
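
Conceptually, freezing does two things: it keeps only the nodes needed to compute the output node, and it replaces each variable with a constant holding its checkpointed value. The toy sketch below illustrates that idea on a dictionary-based graph. It is not how TensorFlow implements freeze_graph, and the node names, op names and `freeze` function are all made up for the example.

```python
def freeze(graph, checkpoint, output_node):
    """Toy freeze: prune to nodes reachable from output_node, then
    turn every remaining variable into a constant."""
    keep, stack = set(), [output_node]
    while stack:                         # walk backwards from the output
        name = stack.pop()
        if name in keep:
            continue
        keep.add(name)
        stack.extend(graph[name]['inputs'])

    frozen = {}
    for name, node in graph.items():
        if name not in keep:
            continue                     # training-only node, dropped
        if node['op'] == 'Variable':
            # Bake the checkpointed value into the graph itself.
            node = {'op': 'Const', 'inputs': [], 'value': checkpoint[name]}
        frozen[name] = dict(node)
    return frozen


# Hypothetical training graph: the last three nodes exist only for training.
graph = {
    'input':      {'op': 'Placeholder',   'inputs': []},
    'weights':    {'op': 'Variable',      'inputs': []},
    'logits':     {'op': 'MatMul',        'inputs': ['input', 'weights']},
    'softmax':    {'op': 'Softmax',       'inputs': ['logits']},
    'labels':     {'op': 'Placeholder',   'inputs': []},
    'loss':       {'op': 'CrossEntropy',  'inputs': ['logits', 'labels']},
    'train_step': {'op': 'ApplyGradient', 'inputs': ['loss', 'weights']},
}
checkpoint = {'weights': [[0.1, 0.2], [0.3, 0.4]]}

frozen = freeze(graph, checkpoint, 'softmax')
print(sorted(frozen))           # ['input', 'logits', 'softmax', 'weights']
print(frozen['weights']['op'])  # Const
```

This is also why the command needs the output node name: it is the starting point from which the nodes to keep are discovered.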

We now just need to check that freezing our model has not caused any significant variation in our accuracy, which we can do by running:

    python3 evaluate_accuracy.py \
        --graph=./freeze/frozen_graph.pb \
        --input_node=input_1_1 \
        --output_node=activation_6_1/Softmax \
        --batchsize=32

This will run our frozen TensorFlow model so we can ensure our accuracy has not changed. For this particular run our training accuracy was:

Accuracy: 0.970

The accuracy of the frozen model is:

Graph accuracy with validation dataset: 0.9704

So we do have a slight variation, but here it has worked in our favour, as can happen in deep learning.
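
The comparison itself is simple arithmetic. As a minimal sketch, assuming class predictions and labels are available as lists of integers, the check could look like the following. The function names and the tolerance of 0.005 are hypothetical choices for illustration and are not taken from evaluate_accuracy.py.

```python
def argmax(probabilities):
    """Index of the largest value, i.e. the predicted class for one sample."""
    return max(range(len(probabilities)), key=probabilities.__getitem__)


def accuracy(predictions, labels):
    """Fraction of predictions that match their labels."""
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)


def within_tolerance(acc_before, acc_after, tol=0.005):
    """True if the two accuracies differ by no more than tol."""
    return abs(acc_before - acc_after) <= tol


# Accuracy figures reported above, before and after freezing.
print(within_tolerance(0.970, 0.9704))  # True
```

A check like this is useful to keep around, because the same comparison is needed again after any later step that changes the model.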

In this tutorial, we have explored how to change a training model into an inference model through freezing. We began by converting our model from Keras to TensorFlow, and then combined the multiple files created by TensorFlow into a single frozen model. This model is now ready to be optimized by Vitis AI through quantization, which we will explore next time.

Hi, thank you for this awesome tutorial. I got an error while running freeze_graph. The error is:

    Traceback (most recent call last):
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/bin/freeze_graph", line 10, in
        sys.exit(run_main())
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/tools/freeze_graph.py", line 487, in run_main
        app.run(main=my_main, argv=[sys.argv[0]] + unparsed)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/platform/app.py", line 40, in run
        _run(main=main, argv=argv, flags_parser=_parse_flags_tolerate_undef)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/absl/app.py", line 299, in run
        _run_main(main, args)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/absl/app.py", line 250, in _run_main
        sys.exit(main(argv))
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/tools/freeze_graph.py", line 486, in
        my_main = lambda unused_args: main(unused_args, flags)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/tools/freeze_graph.py", line 378, in main
        flags.saved_model_tags, checkpoint_version)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/tools/freeze_graph.py", line 361, in freeze_graph
        checkpoint_version=checkpoint_version)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/tools/freeze_graph.py", line 190, in freeze_graph_with_def_protos
        var_list=var_list, write_version=checkpoint_version)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saver.py", line 828, in __init__
        self.build()
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saver.py", line 840, in build
        self._build(self._filename, build_save=True, build_restore=True)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saver.py", line 878, in _build
        build_restore=build_restore)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saver.py", line 482, in _build_internal
        names_to_saveables)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saving/saveable_object_util.py", line 343, in validate_and_slice_inputs
        for converted_saveable_object in saveable_objects_for_op(op, name):
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saving/saveable_object_util.py", line 206, in saveable_objects_for_op
        variable, "", name)
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/training/saving/saveable_object_util.py", line 83, in __init__
        self.handle_op = var.op.inputs[0]
      File "/opt/vitis_ai/conda/envs/vitis-ai-tensorflow/lib/python3.6/site-packages/tensorflow_core/python/framework/ops.py", line 2154, in __getitem__
        return self._inputs[i]
    IndexError: list index out of range

Can you please help me?

Hi daivik,

Which version of Vitis-ai are you running? Just from the logs it looks like something is going wrong with the input nodes, so I would investigate that to begin with.

Hey Daivik. I got the same error. Have you already solved it? Can you please help me?

Hey Kaphwan, I'm facing the same error and I'm using a recent version of vitis-ai.

Can you please help me if you have resolved it?

From my side as well, warmest thanks for the great tutorial!

I am facing the same problem as all the comments above, has anyone found a solution?